The Chat GPT architecture is based on an adapter network, a type of neural network that was introduced in a 2017 paper by Google researchers. The adapter network is designed to process sequential data such as text, and uses self-attention mechanisms to assess the importance of different parts of the input when making predictions.

This article will cover the following topics:

- Introduction Of ChatGPT

- The technical Principles of ChatGPT

- Whether ChatGPT can replace traditional search engines like Google

Introduction Of ChatGPT

As an intelligent dialog system, ChatGPT has recently gained popularity, generating a lot of buzz in the tech community and inspiring many to share ChatGPT-related content and test examples online. The results are impressive. The last time I can remember an AI technology causing such a sensation was when GPT-3 was released in the field of NLP, over two and a half years ago.

Back then, the heyday of artificial intelligence was in full swing, but today it feels like a distant memory. In the multimodal domain, models such as DaLL E2 and Stable Diffusion represented the diffusion model, which has been popular in the past half a year with AIGC models.

Today, the AI torch has passed to Chat GPT, which certainly belongs in the AIGC category. So in the current AI low period after the bubble burst, AIGC is indeed a lifeline for AI.

Of course, we are looking forward to the GPT-4 being released soon, and we hope that OpenAI can continue to support the industry and bring a little warmth.

Let’s not dwell on examples of ChatGPT’s capabilities, as they are everywhere online. Instead, let’s talk about the technology behind ChatGPT and how it achieves such extraordinary results. Since ChatGPT is so powerful, can it replace existing search engines like Google? If so, why? If not, why not?

In this article, I will try to answer these questions from my own understanding. Please note that some of my opinions may be biased and should be taken with a grain of salt. Let’s first see what ChatGPT has done to achieve such good results.

The Technical Principles of ChatGPT

In terms of overall technology, ChatGPT is based on the powerful GPT-3.5 Large Language Model (LLM) and introduces “reinforcement learning + human-annotated data” (RLHF) to continuously refine the pre-trained language model.

The main goal is to allow the LLM to understand the meaning of human commands (such as writing a short essay, generating answers to knowledge questions, brainstorming different types of questions, etc.) and to judge which answers are of high quality for a given message. (user question) based on multiple criteria (such as being informative, rich in content, useful to the user, harmless, and free of discriminatory information).

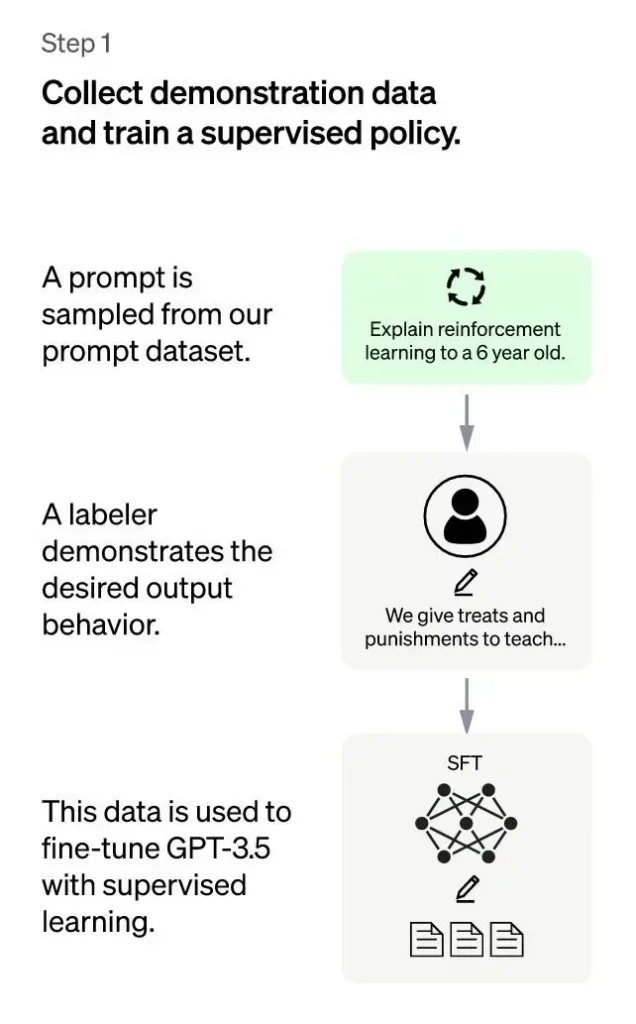

Under the framework of “human-annotated data + reinforcement learning”, the ChatGPT training process can be divided into the following three stages:

ChatGPT: First Stage

The first stage is a supervised policy model during the cold start phase. Although GPT-3.5 is strong, it is difficult to understand the different intentions behind the different types of human commands and to judge whether the generated content is of high quality.

To give GPT-3.5 a preliminary understanding of the intentions behind the commands, a batch of prompts (i.e., commands or questions) submitted by test users will be randomly selected and professionally annotated to provide high-quality responses for questions. specified indications. These manually entered data will be used to fit the GPT-3.5 model.

Through this process, we can consider that GPT-3.5 has initially acquired the ability to understand the intents contained in human prompts and provide relatively high-quality responses based on these intents. However, this is not enough.

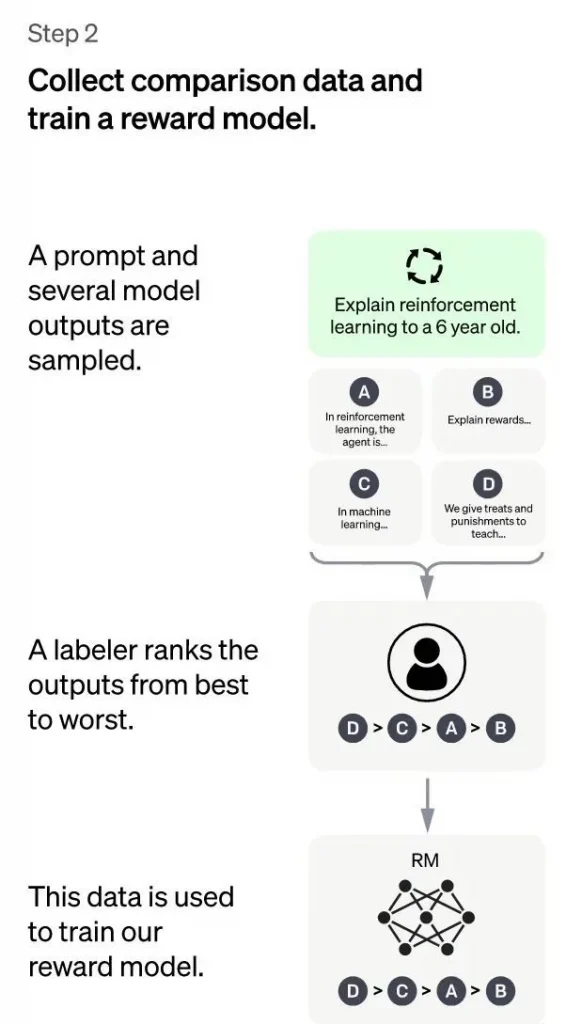

ChatGPT: Second Stage

The main goal of the second stage is to train a reward model (RM) using manually annotated training data. This stage consists of randomly sampling a batch of requests submitted by the user (which are mostly the same as the first stage), using the cold start model installed in the first stage to generate K different responses for each request. . . As a result, the mode produces an annotator this annotator then ranks the K results based on various criteria (such as relevance, information, dangerousness, etc.) and provides the rank order of the K results, which is the manually entered data for this stage.

Next, we’ll use the ordered data to train a reward model using the common peer-to-peer learning method for ranking. For results ordered by K, we combine them two by two to form $\binom{k}{2}$ pairs of training data. ChatGPT uses a pairwise loss to train the reward model. ThM model the AC generator and generates a score that assesses the quality of the response. For a training data pair, we assume that answer 1 comes before answer 2 in manual classification, so the loss function encourages the RM model to score higher than.

Read Also: What is ChatGPT Pro?

In summary, in this phase, the supervised policy model generates K resclotheorthe each request after the cold start. The results are manually ranked in descending order of quality and used as training data to train the reward model using the learning pairwise ranking method. For the trained RM model, the input is and the output is the quality score of the result. The higher the score, the higher the quality of the response generated.

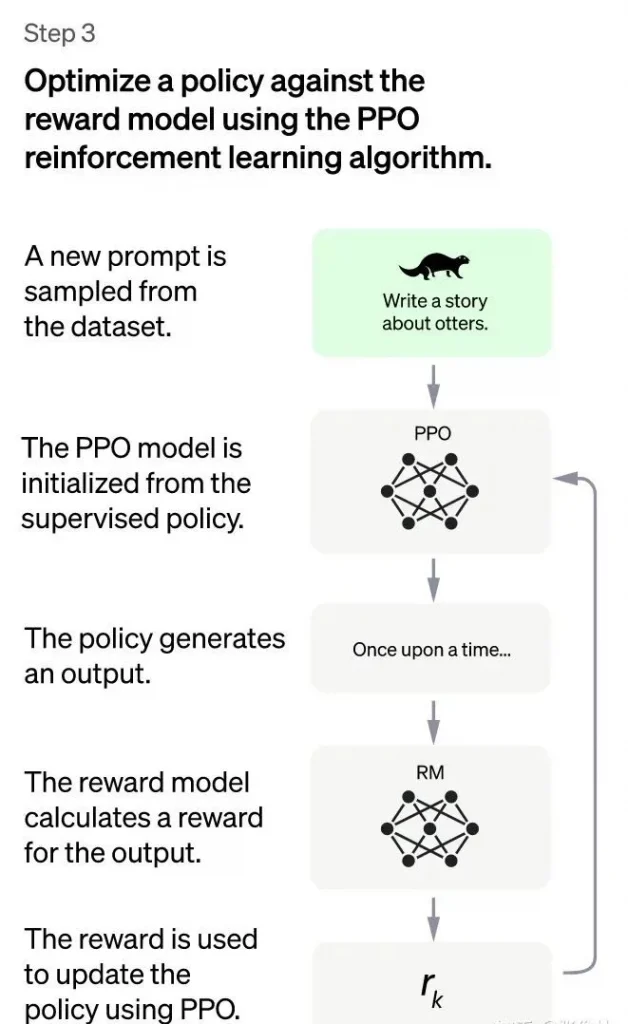

ChatGPT: Third stage

In the third phase of ChatGPT, reinforcement learning is used to improvpre-trainedbility of the pretrained model. In this phase, manual annotation data is not needed, but the RM model trained in the previous phase is used to update the parameters of the previously trained model based on the RM scoring results.

Specifically, a batch of new commands is randomly sampled from the prompts sent by the user (which are different from the first and second phases), and the cold start mode initializes the parameters of the PPO model. Then, for the randomly selected prompts, the PPO model generates responses and the RM model trained in the previous phase provides a reward score to assess the quality of the responses.

This reward score is the overall reward given by the RM to the complete response (consisting of a sequence of words). With the final reward of the word sequence, each word can be considered as a time step and the reward is transmitted from back to front, generating a policy gradient that can update the parameters of the PPO model.

This is the standard reinforcement learning process, the goal of which is to train the LLM to produce high-reward responses that meet RM standards, i.e., high-quality responses.

If we continue to iterate through the second and third phases, it is obvious that each iteration will make the LLM model more and more capable. Because the second phase improves the capability of the RM model through manually annotated data, and in the third phase, the improved RM model will score responses to new prompts more accurately and use reinforcement learning to animate the LLM model. to learn new high points. -quality content, which plays a similar role to the use of pseudo-tags to expand high-quality training data, thus the LLM model is further improved.

Obviously, the second phase and the third phase have a mutual promotion effect, so continuous iteration will have a sustained improvement effect.

Despite this, I don’t think the use of reinforcement learning in the third phase is the main reason why the ChatGPT model works particularly well. Suppose the third phase does not use reinforcement learning, but instead uses the following method: Similar to the second phase, for a new indicator, the cold start model can generate k responses, which are scored by the model.

RM Respectively, and we chose the response with the highest score to form new training data to refine the LLM model. Assuming this mode is used, I think its effect can be comparable to reinforcement learning, although it is not as sophisticated, the effect may not necessarily be much worse.

Regardless of the technical mode adopted in the third phase, it is essentially likely that the RM learned in the second phase will be used to expand the high-quality training data of the LLM model.

The above is the ChatGPT training process, which is mainly based on the role of the instructor. ChatGPT is improved instructed, and the points of improvement are mainly different in the annotated data collection method.

In other respects, including the structure of the model and the training process, it essentially follows instructions. It is foreseeable that this technology of reinforcement learning from human feedback will quickly spread to other directions of content generation, such as a very easy to think of, similar to “A machine translation model based on reinforcement learning from human feedback” and many others.

However, I personally think that adopting this technology in a specific field of NLP content generation may not be very meaningful, because ChatGPT itself can handle a wide variety of tasks, covering many sub-fields of NLP generation, so if a subfield of NLP adopts this technology again, it really isn’t of much value, because ChatGPT considers that its feasibility has been verified.

If this technology is applied to other fields of modal generation, such as images, audio, and video, it may be a direction worth exploring, and we may soon see similar work as “A Learning-Based XXX Diffusion Model.” by human reinforcement”. ” “. Feedback”, which should still be very significant.

The third phase of the ChatGPT training process is to use reinforcement learning to improve the capability of the previously trained model. In this phase, no human-labeled data is required, instead, the RM model trained in the previous phase is used to update the parameters of the previously trained model.

Whether ChatGPT Can Replace Traditional Search Engines Like Google

Can ChatGPT or a future version like GPT4 replace traditional search engines like Google? Personally, I think it’s not possible at the moment, but with some technical modifications, it could theoretically be possible to replace traditional search engines.

There are three main reasons why the current form of chatGPT cannot replace search engines: First, for many types of knowledge-related questions, chatGPT will provide answers that seem reasonable but are actually incorrect. ChatGPT’s answers seem well thought out and people like me who are not well-educated would easily believe them.

However, considering that it can answer many questions well, this would be confusing for users: if I don’t know the correct answer to the question I asked, should I trust the result of ChatGPT or not? At this point, no judgment can be made. This problem can be fatal.

Second, the current ChatGPT model, which is based on a large GPT model and trained more with annotated data, is not easy for LLM models to absorb new insights. New knowledge is constantly emerging and it is unrealistic to retrain the GPT model every time new knowledge appears, either in terms of training time or cost.

If we adopt a mode of fine-tuning for the new knowledge, it seems feasible and relatively low-cost, but it is easy to introduce new data and cause disastrous forgetting of the original knowledge, especially for short-term frequent fine-tuning, then makes this problem more serious.

Therefore, how integrating new knowledge in near real-time in LLM is a very challenging problem.

Third, the training cost and online inference cost of ChatGPT or GPT4 are too high, resulting in if you are faced with millions of requests from real search engine users, assuming the free strategy continues, OpenAI can’t support it, but if the charging strategy is adopted, it will greatly reduce the user base, if charging is a dilemma, of course, if the training cost can be greatly reduced, then the dilemma can be solved by itself. The above three reasons have led to ChatGPT being unable to replace traditional search engines today.

Can these problems be fixed? Actually, if we take the technical path of ChatGPT as the main framework and absorb some of the existing technical means used by other dialog systems, we can modify ChatGPT from a technical perspective. Except for the cost problem, the first two technical problems mentioned above can be solved well.

We only need to introduce the following abilities of the sparrow system into ChatGPT: the display of evidence of results generated based on retrieval results, and the adoption of retrieval mode for new insights introduced by the LaMDA system.

Then, the timely introduction of new knowledge and the verification of the credibility of the generated content are not major problems.

the bio information is nice , but am unable to log in

kindly, assist with the steps to follow